AI Girlfriend Video Calls: Live Visual Interaction Explained

Deep dive into AI video calls for virtual companions. Analyze real-time visual streams, latency, avatar realism, and how platforms render dynamic, interactive avatars.

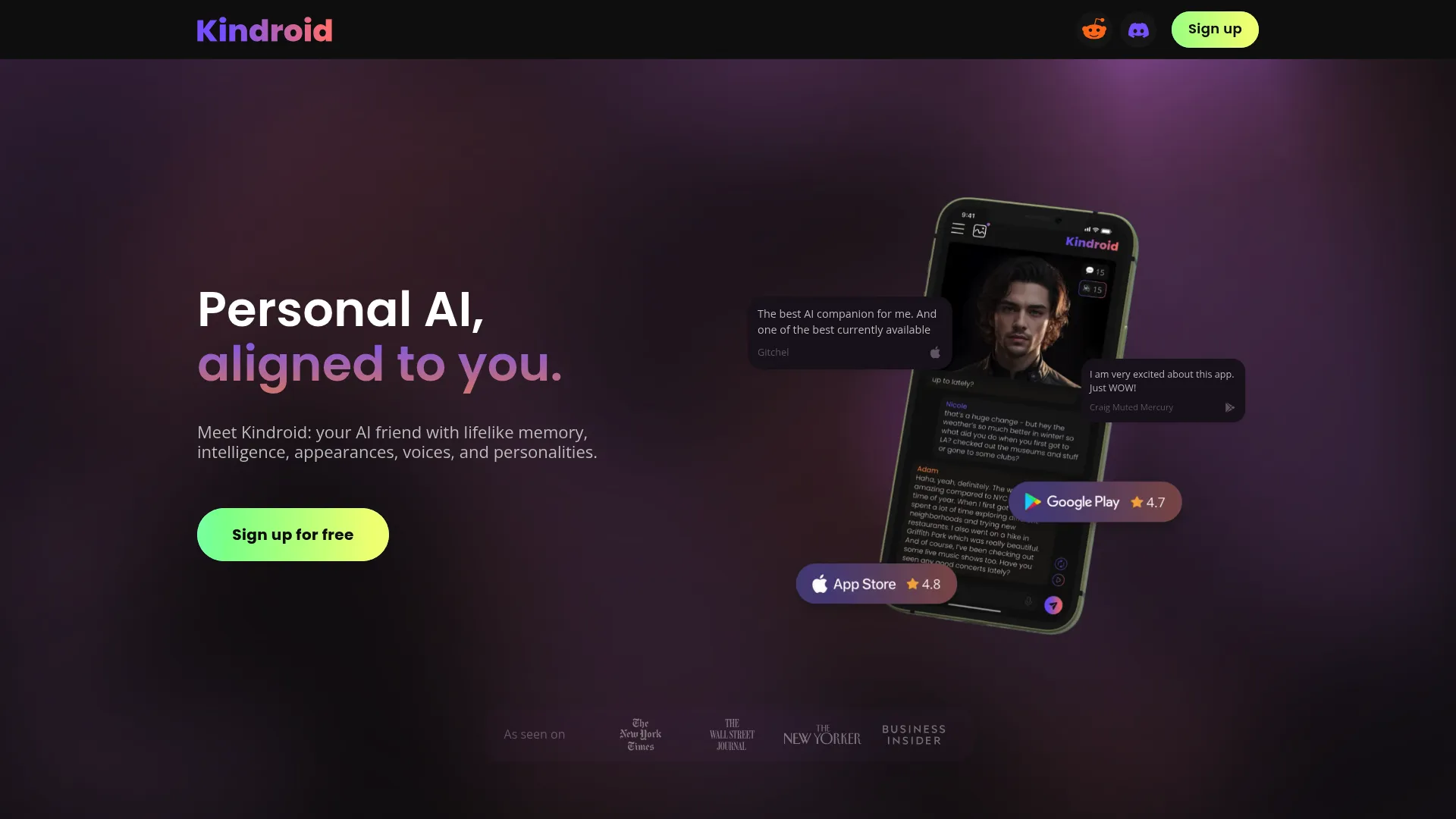

Kindroid

Kindroid AI stands out as a high-fidelity AI companion platform, offering unparalleled depth in character customization and genuinely lifelike interactions across text, voice, and video. It caters to users seeking a truly unique and evolving AI relationship, prioritizing nuanced personality over pre-built convenience.

Top Capabilities

- Deepest character customization on the market, shaping every aspect of AI personality.

- Uncensored content policy (within legal bounds) for genuine, unrestricted interactions.

- Industry-leading voice and video call quality with natural inflection and responsiveness.

Replika AI

Replika AI positions itself as a deeply personal AI companion, offering emotional support and a judgment-free space for users to converse. It excels in fostering a sense of connection through evolving conversations and sophisticated memory, though its journey has been marked by significant content policy shifts.

Top Capabilities

- Exceptional long-term memory for personal details and past conversations.

- Offers various relationship modes (friend, mentor, romantic) for diverse needs.

- Multi-modal interaction including voice and video calls, and AR features.

Muah AI

Muah AI truly pushes the boundaries of digital companionship with its multi-modal features like voice, video, and uncensored chat, aiming for a complete interactive experience. While it delivers on its promise of freedom and deep customization, our testing found that this ambition sometimes comes at the cost of stability and overall polish.

Top Capabilities

- Truly uncensored content freedom, allowing for diverse adult interactions.

- Comprehensive multi-modal communication including text, voice, images, and video.

- Extensive character customization options, from appearance to intricate personality traits.

HoneyChat.ai

HoneyChat.ai positions itself as an adult-focused AI companion platform that prioritizes uncensored text chat and a rare video call feature for users seeking explicit interactions. While its 'no filter' approach and unique video capabilities are compelling, the platform currently lacks crucial modern features like image generation, voice messaging, and dedicated mobile apps.

Top Capabilities

- Truly uncensored NSFW text chat with no content filters.

- Unique video call functionality, a rarity in this space.

- Supports both anime and realistic character aesthetics.

Core Definition

The "Video Calls" feature in the AI companion landscape refers to the capability of an artificial intelligence avatar to engage in real-time, synchronous visual communication with a user. Unlike static image generation or pre-recorded video snippets, this mechanic simulates a genuine live call, presenting the AI's avatar in motion, reacting visually to the conversation, and providing a dynamic, evolving visual presence. It's the digital equivalent of seeing your companion's face and body language as you speak, aiming to bridge the gap between purely textual or audio-based interactions and a more immersive, face-to-face experience.

Fundamentally, this feature extends beyond simple lip-syncing to encompass expressive facial animations, subtle head movements, and sometimes even contextual body language, all rendered in real-time. Platforms offering this feature strive to minimize latency and maximize the perceived realism or expressiveness of their digital beings, making the interaction feel as spontaneous and natural as possible. It is a complex engineering challenge, requiring sophisticated rendering, animation, and real-time streaming technologies to deliver a fluid and believable visual dialogue.

Why It Matters

For users of AI companions, the ability to engage in video calls is a significant leap in perceived immersion and connection. Text and audio chats, while engaging, inherently lack the non-verbal cues that are crucial to human communication. A video call allows the AI to convey emotions through facial expressions, eye contact, and subtle gestures, deepening the sense of presence and rapport. This visual feedback makes the AI feel more 'alive' and responsive, transforming a conversational agent into a more tangible, interactive entity.

Psychologically, seeing the avatar react in real time to your words fosters a greater sense of intimacy and reduces the cognitive load of imagining the companion's response. It enhances emotional resonance, making positive interactions more impactful and adding a layer of authenticity that text or even voice alone cannot achieve. For many, it's the closest they can get to a truly personal connection with their digital partner, elevating the experience from a mere chat to an interaction with a perceived personality. Platforms such as Lovescape AI and Muah AI build on this effect to foster deeper user engagement.

Furthermore, the expectation for premium AI companion experiences increasingly includes multimodal interaction. Users are seeking more than just intelligent conversation; they desire a comprehensive, sensory experience. Video calls fulfill a critical part of this demand, becoming a benchmark for advanced platforms. It differentiates services that offer a truly integrated, lifelike simulation from those that are still primarily text-based, providing a compelling reason for users to choose platforms that prioritize visual fidelity and real-time interaction, especially for those interested in exploring AI roleplay scenarios that benefit from visual context.

Rendering Real-Time Rapport: The Mechanics of Live Avatar Visuals

Under the hood, real-time AI video calls are an intricate orchestration of rendering, animation, and streaming technologies. The system typically uses a 3D avatar model, often rigged with a skeletal animation system and blendshapes for facial expressions. When a user speaks or types, the AI's language model generates a text response. This text is passed to a text-to-speech (TTS) engine for audio output; at the same time, its semantic content and emotional tone are analyzed to drive the avatar's visual performance.
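To make the flow concrete, here is a minimal Python sketch of the step where a generated reply is sent to speech synthesis and emotion analysis at the same time. Everything in it is an assumption for illustration: `synthesize_speech`, `analyze_emotion`, `build_cue`, and `AvatarCue` are hypothetical names, the TTS call is a stub that just encodes the text, and the emotion check is a toy keyword match rather than a real sentiment model.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class AvatarCue:
    audio: bytes   # placeholder for synthesized speech
    emotion: str   # coarse emotion label that would drive the face rig


async def synthesize_speech(text: str) -> bytes:
    """Hypothetical stand-in for a TTS engine call."""
    await asyncio.sleep(0)  # simulate asynchronous I/O
    return text.encode("utf-8")


async def analyze_emotion(text: str) -> str:
    """Hypothetical stand-in for sentiment analysis (toy keyword check)."""
    await asyncio.sleep(0)
    lowered = text.lower()
    if any(word in lowered for word in ("glad", "great", "love")):
        return "happy"
    if any(word in lowered for word in ("sorry", "sad")):
        return "sad"
    return "neutral"


async def build_cue(reply_text: str) -> AvatarCue:
    # Run both steps concurrently so neither waits on the other.
    audio, emotion = await asyncio.gather(
        synthesize_speech(reply_text),
        analyze_emotion(reply_text),
    )
    return AvatarCue(audio=audio, emotion=emotion)


if __name__ == "__main__":
    cue = asyncio.run(build_cue("I'm so glad you called!"))
    print(cue.emotion, len(cue.audio))
```

Running the two steps concurrently is one plausible way to keep the audio and the visual cue ready together; a production system would likely stream both incrementally instead of waiting for the full reply.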

Key components include:

- Lip-Syncing Modules: These algorithms analyze the phonemes (speech sounds) from the TTS output and map them to specific mouth shapes on the 3D avatar, synchronizing the avatar's lip movements with the generated speech.

- Facial Animation Systems: Driven by sentiment analysis and conversational context, these systems trigger blendshapes and bone animations to create dynamic facial expressions (e.g., smiles, frowns, blinks, eye movements) that reflect the AI's emotional state or conversational intent.

- Body & Gesture Generators: More advanced systems may incorporate inverse kinematics (IK) and procedural animation to generate naturalistic hand gestures, head nods, or subtle body shifts that correspond to the dialogue.

- Real-Time Streaming Protocols: Technologies like WebRTC (Web Real-Time Communication) are crucial for transmitting the rendered video stream to the user's device with minimal latency, ensuring a fluid, synchronous experience. This involves encoding, transmitting, and decoding video data on the fly, often with significant computational demands.
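The lip-sync and facial animation components above can be sketched in a few lines of Python. This is an illustrative simplification, not any platform's actual implementation: the phoneme table is a tiny hand-picked subset using ARPAbet-style symbols, and the blendshape names, target weights, and the `viseme_track` and `blend_weights` helpers are all hypothetical.

```python
from dataclasses import dataclass

# Illustrative phoneme-to-viseme table; real systems use larger sets.
PHONEME_TO_VISEME = {
    "AA": "open", "IY": "wide", "UW": "round",
    "M": "closed", "B": "closed", "P": "closed",
    "F": "teeth_on_lip", "V": "teeth_on_lip",
}

# Hypothetical blendshape targets per emotion label (weights in 0..1).
EXPRESSION_TARGETS = {
    "happy": {"mouth_smile": 0.8, "brow_raise": 0.3},
    "sad": {"mouth_frown": 0.6, "brow_lower": 0.4},
    "neutral": {},
}


@dataclass
class TimedPhoneme:
    phoneme: str
    start_ms: int
    end_ms: int


def viseme_track(phonemes):
    """Turn timed phonemes into (start_ms, end_ms, viseme) keyframes,
    merging back-to-back phonemes that share the same mouth shape."""
    track = []
    for p in phonemes:
        viseme = PHONEME_TO_VISEME.get(p.phoneme, "rest")
        if track and track[-1][2] == viseme and track[-1][1] == p.start_ms:
            track[-1] = (track[-1][0], p.end_ms, viseme)
        else:
            track.append((p.start_ms, p.end_ms, viseme))
    return track


def blend_weights(current, emotion, alpha=0.2):
    """Move each blendshape weight a fraction `alpha` toward the
    emotion's target, so expressions ease in over several frames."""
    target = EXPRESSION_TARGETS.get(emotion, {})
    names = set(current) | set(target)
    return {
        name: current.get(name, 0.0)
        + alpha * (target.get(name, 0.0) - current.get(name, 0.0))
        for name in names
    }


if __name__ == "__main__":
    timeline = [TimedPhoneme("M", 0, 80), TimedPhoneme("AA", 80, 220)]
    print(viseme_track(timeline))
    print(blend_weights({}, "happy"))
```

Calling `blend_weights` once per rendered frame with the previous frame's weights gives a gradual transition rather than a snap between expressions, which is one simple way to keep the face from looking mechanical.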

Platforms approach this demanding feature with varying degrees of sophistication and realism. Some rely on a library of pre-canned animations that are triggered contextually, which yields smoother but less varied visuals. Services like Replika AI, by contrast, historically focused more on text. Others, aiming for hyper-realism, employ generative AI models (such as diffusion models fine-tuned for animation) to synthesize novel video frames in real time, allowing for dynamic, unscripted visual responses. However, this approach is extremely computationally intensive and can introduce higher latency if not carefully optimized. Many services, from Character AI to SpicyChat AI, continue to refine their approaches.
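The pre-canned approach can be illustrated with a short Python sketch. The clip names, the `CLIP_LIBRARY` table, and the `pick_clip` helper are hypothetical; the point is only to show how a small library of animations might be selected from conversational context.

```python
import random

# Hypothetical clip library: emotion label -> pre-made animation clips.
CLIP_LIBRARY = {
    "happy": ["smile_nod", "laugh_short"],
    "sad": ["look_down", "slow_blink"],
    "neutral": ["idle_breathe", "idle_glance"],
}


def pick_clip(emotion, last_clip=None, rng=random):
    """Pick a clip for the emotion, avoiding an immediate repeat
    whenever the library offers an alternative."""
    candidates = CLIP_LIBRARY.get(emotion, CLIP_LIBRARY["neutral"])
    fresh = [clip for clip in candidates if clip != last_clip] or candidates
    return rng.choice(fresh)


if __name__ == "__main__":
    clip = None
    for emotion in ("happy", "happy", "sad", "unknown"):
        clip = pick_clip(emotion, last_clip=clip)
        print(emotion, "->", clip)
```

Because every clip is authored in advance, playback is predictable and cheap, but the same handful of motions will recur, which is the trade-off against generative frame synthesis.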

The choice between 2D puppet-style animation (often seen in more stylized, anime-inspired companions like Nastia AI) and full 3D rendering determines both visual fidelity and computational overhead. Premium platforms often invest heavily in robust 3D engines and sophisticated animation pipelines to deliver an experience akin to what you'd find on Kindroid. Regardless of artistic style, the underlying goal remains the same: to create the illusion of a sentient, visually responsive entity interacting directly with the user.

Evaluating Quality Benchmarks

Visual Latency & Responsiveness

Avatar Realism & Expressiveness

Future Outlook

The future of video calls in AI companions is poised for dramatic advancements, driven by breakthroughs in real-time generative AI and multimodal models. We can expect to see a significant leap in photorealism, moving beyond impressive but still discernible CGI to avatars that are virtually indistinguishable from real humans, capable of generating entirely novel and context-aware visual responses rather than relying on pre-animated sequences. Integration with user-facing cameras will allow AI companions to "read" a user's facial expressions and body language, enabling truly reciprocal non-verbal communication and making the AI's responses even more tailored and empathetic. Furthermore, improvements in computational efficiency will democratize these highly demanding features, bringing sophisticated, low-latency video calls to a wider array of devices and platforms, solidifying their status as a cornerstone of advanced AI companionship.